Runtime Risk: Why Traditional Security Fails Business-Built AI

As AI agents and citizen-developer applications proliferate across the enterprise, security teams are confronting a problem that looks familiar but behaves entirely differently. What used to be developer-led software now emerges from every corner of the business, created by marketing, finance, operations, and support teams, and executes with legitimate access to data and systems of record.

Much of this activity falls into what many now call shadow AI: systems built with approved tools but without centralized security oversight. At the same time, organizations are racing to formalize citizen development governance and broader responsible AI governance programs. Yet governance frameworks alone do not address what happens once these systems are live.

These applications rarely receive the same scrutiny, or the same runtime defenses, as traditional software.

This isn’t hypothetical. It’s the security challenge many enterprises face as adaptive systems run in production without the controls built for them.

What Makes AI Agents Different

Traditional automation is predictable: fixed workflows, defined triggers, repeatable outcomes. AI agents are not.

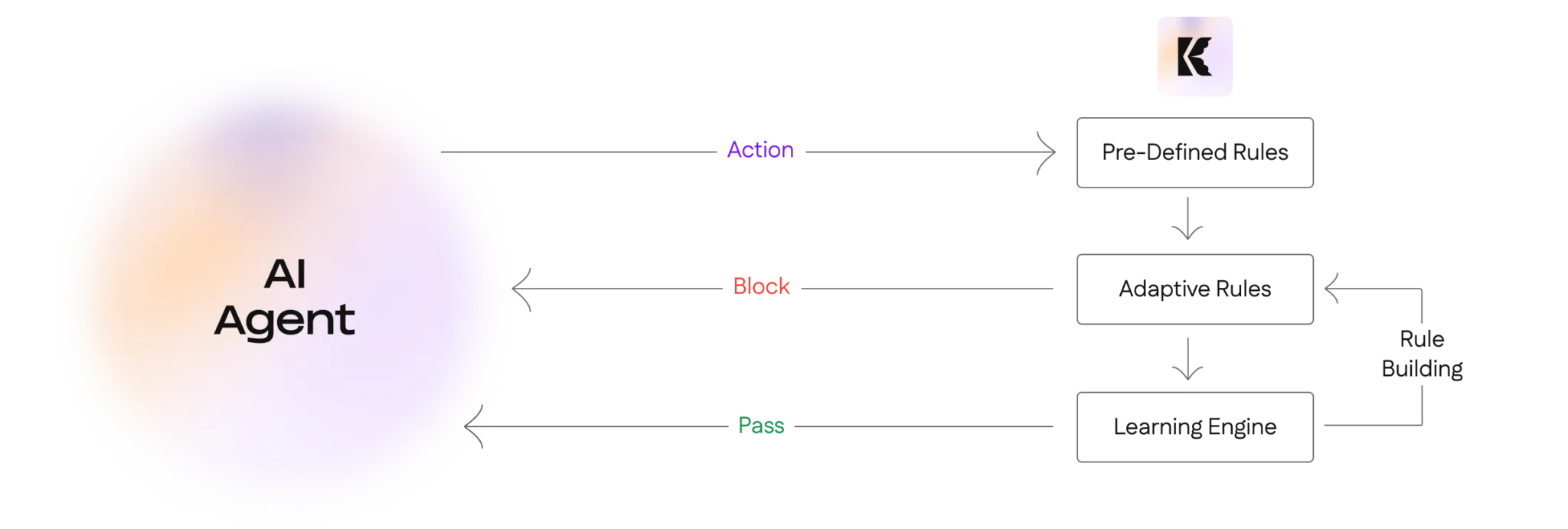

An AI agent is given a goal, not a script. It evaluates context, selects actions, and behaves dynamically based on live data and available tools. That means risk doesn’t reside in a static configuration file or access control list. It materializes in production, in real time.

Consider a simple internal assistant built on a no-code platform and connected through approved connectors. A team member with limited privileges interacts with it. Through conversational input alone, the agent retrieves and synthesizes information the employee shouldn’t directly access, all while operating within granted permissions.

No access control violation occurs. No policy is technically broken.

The agent simply behaves in a way no one anticipated.

This is how shadow AI quietly becomes operational infrastructure, not through malicious intent, but through emergent behavior. Risk is not access; it’s use.

Why Prevention-First Security Doesn’t Scale

Most organizations still approach AI risk the same way they approach classic software risk:

- Pre-deployment reviews

- Access control enumeration

- Manual approval gates

- Periodic audits

These controls assume:

- Systems are centrally owned

- Behavior is relatively static

- Risk can be modeled before deployment

AI agents break every assumption.

Workflows change weekly. Agents adapt. Integrations evolve. Permissions that were acceptable yesterday may be risky today, not because they were misconfigured, but because context shifted.

This is where many citizen development governance initiatives stall. Policies may define who can build and what platforms are approved, but they rarely account for how behavior evolves once systems are deployed.

In adaptive environments, pre-deployment inspection isn’t enough. Runtime is where risk actually shows up.

From Permissions to Contextual Governance

Security teams have historically asked:

“Does this system have access?”

Modern risk requires asking:

“Is this action appropriate right now, in this context?”

AI agents frequently require broad permissions to function. Permission alone provides no signal about whether that access will be misused at a specific moment. What matters is whether the agent’s actions align with declared purpose, organizational policy, and expected operational patterns.
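The gap between the two questions can be made concrete with a minimal Python sketch. Everything in it is an illustrative assumption rather than a real product API: the agent name `helpdesk-bot`, the resource labels, the role names, and the three lookup tables are all hypothetical. It only shows that an action can pass a permission check while still falling outside the agent's declared purpose and the requester's own entitlements.

```python
from dataclasses import dataclass

# Hypothetical data for illustration: what the agent is permitted to reach,
# what it was declared to be for, and what each human role may see directly.
GRANTED_ACCESS = {"helpdesk-bot": {"kb.articles", "tickets", "hr.salaries"}}
DECLARED_PURPOSE = {"helpdesk-bot": {"kb.articles", "tickets"}}
REQUESTER_MAY_SEE = {
    "support_agent": {"kb.articles", "tickets"},
    "hr_manager": {"kb.articles", "tickets", "hr.salaries"},
}


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    resource: str
    requester_role: str  # role of the person driving the conversation


def has_access(action: AgentAction) -> bool:
    """The traditional question: does this system have access?"""
    return action.resource in GRANTED_ACCESS.get(action.agent_id, set())


def is_appropriate(action: AgentAction) -> bool:
    """The contextual question: is this action appropriate right now?"""
    within_purpose = action.resource in DECLARED_PURPOSE.get(action.agent_id, set())
    requester_allowed = action.resource in REQUESTER_MAY_SEE.get(
        action.requester_role, set()
    )
    return has_access(action) and within_purpose and requester_allowed


action = AgentAction("helpdesk-bot", "hr.salaries", "support_agent")
print(has_access(action))      # True: no access control violation occurs
print(is_appropriate(action))  # False: outside purpose and requester's entitlements
```

A real deployment would draw these signals from identity, policy, and telemetry systems rather than static dictionaries; the sketch only separates the two checks.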

This is where responsible AI governance must extend beyond ethical guidelines and documentation. Governance cannot stop at design principles. It must include enforcement mechanisms that evaluate behavior as it unfolds.

Context is no longer optional. It is foundational.

Behavioral Baselines: The Foundation of Runtime Control

One promising practice emerging in advanced environments is the use of behavioral baselines.

Instead of trying to enumerate every acceptable action in advance, security teams:

- Observe normal activity over time

- Understand typical data accessed

- Track common interaction patterns

- Note expected execution timing and frequency

Under this model, risk is defined by deviation, not entitlement. When an agent begins acting outside its baseline, security can intervene before harm occurs.
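As a rough illustration of "deviation, not entitlement," the sketch below records which resources an agent normally touches and how many requests it makes per hour, then flags activity that departs from that history. The class name `BehavioralBaseline`, the z-score threshold of 3.0, and the choice of a simple z-score are assumptions made for this example, not a prescribed detection method.

```python
from statistics import mean, pstdev


class BehavioralBaseline:
    """Hypothetical baseline: resources touched and hourly request volume."""

    def __init__(self, z_threshold: float = 3.0):
        self.known_resources = set()
        self.hourly_counts = []
        self.z_threshold = z_threshold  # assumed cutoff, tune per environment

    def observe(self, resources, requests_in_hour):
        """Fold one hour of normal activity into the baseline."""
        self.known_resources.update(resources)
        self.hourly_counts.append(requests_in_hour)

    def deviations(self, resources, requests_in_hour):
        """Return human-readable findings for activity outside the baseline."""
        findings = []
        unseen = sorted(set(resources) - self.known_resources)
        if unseen:
            findings.append("unseen resources: " + ", ".join(unseen))
        if len(self.hourly_counts) >= 2:
            mu = mean(self.hourly_counts)
            sigma = pstdev(self.hourly_counts)
            # A perfectly flat history (sigma == 0) is skipped in this sketch.
            if sigma > 0 and abs(requests_in_hour - mu) / sigma > self.z_threshold:
                findings.append(
                    f"request volume {requests_in_hour} far from typical {mu:.1f}"
                )
        return findings


baseline = BehavioralBaseline()
for count in (10, 12, 11, 9, 10):
    baseline.observe({"kb.articles", "tickets"}, count)

print(baseline.deviations({"tickets"}, 11))                 # within baseline
print(baseline.deviations({"tickets", "hr.salaries"}, 60))  # two findings
```

Note that the agent's permissions never appear in this code: the only input is observed behavior, which is the point of the baseline model.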

This approach bridges the gap between governance intent and operational reality. It’s how adaptive security must function when behavior is dynamic and emergent.

Enforcement at Runtime Without Disruption

Securing adaptive systems means acting where risk appears: in production. Static checks before deployment miss behaviors that only emerge once systems interact with users, data, and other tools.

But runtime protection doesn’t require shutting down innovation. Effective controls operate with precision:

- Pausing specific high-risk queries

- Requiring step-up validation for unexpected actions

- Blocking anomalous outbound communication

- Alerting owners with actionable context

This enables organizations to enforce policy without undermining productivity, a balance that both citizen development governance and responsible AI governance ultimately seek to achieve.
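The graduated responses listed above can be sketched as a small decision function. This is a minimal illustration under stated assumptions: the `RuntimeEvent` fields, the `Response` values, and the priority order (outbound anomalies first, then high-risk queries, then unexpected actions) are hypothetical choices for the example, not a description of any specific product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Response(Enum):
    ALLOW = "allow"
    PAUSE_QUERY = "pause_query"
    STEP_UP = "step_up_validation"
    BLOCK_OUTBOUND = "block_outbound"


@dataclass(frozen=True)
class RuntimeEvent:
    # Hypothetical signals; in practice they would come from runtime monitoring.
    agent_id: str
    owner: str
    high_risk_query: bool = False
    unexpected_action: bool = False
    anomalous_outbound: bool = False


@dataclass(frozen=True)
class Decision:
    response: Response
    alert: Optional[str] = None  # message for the agent's owner, if any


def decide(event: RuntimeEvent) -> Decision:
    """Pick the narrowest control that addresses the signal, then alert the owner."""
    if event.anomalous_outbound:
        response, reason = Response.BLOCK_OUTBOUND, "anomalous outbound communication"
    elif event.high_risk_query:
        response, reason = Response.PAUSE_QUERY, "high-risk query"
    elif event.unexpected_action:
        response, reason = Response.STEP_UP, "unexpected action"
    else:
        return Decision(Response.ALLOW)  # normal activity proceeds untouched
    alert = f"To {event.owner}: agent {event.agent_id} -> {response.value} ({reason})"
    return Decision(response, alert)


print(decide(RuntimeEvent("helpdesk-bot", "ops-team")))
print(decide(RuntimeEvent("helpdesk-bot", "ops-team", unexpected_action=True)))
```

Each branch targets a single action rather than the whole agent, which is what lets enforcement run without taking the system offline.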

The Strategic Shift: Continuous Assurance

The old model (define, approve, review periodically) is ending. Risk no longer lives in gates. It lives in motion.

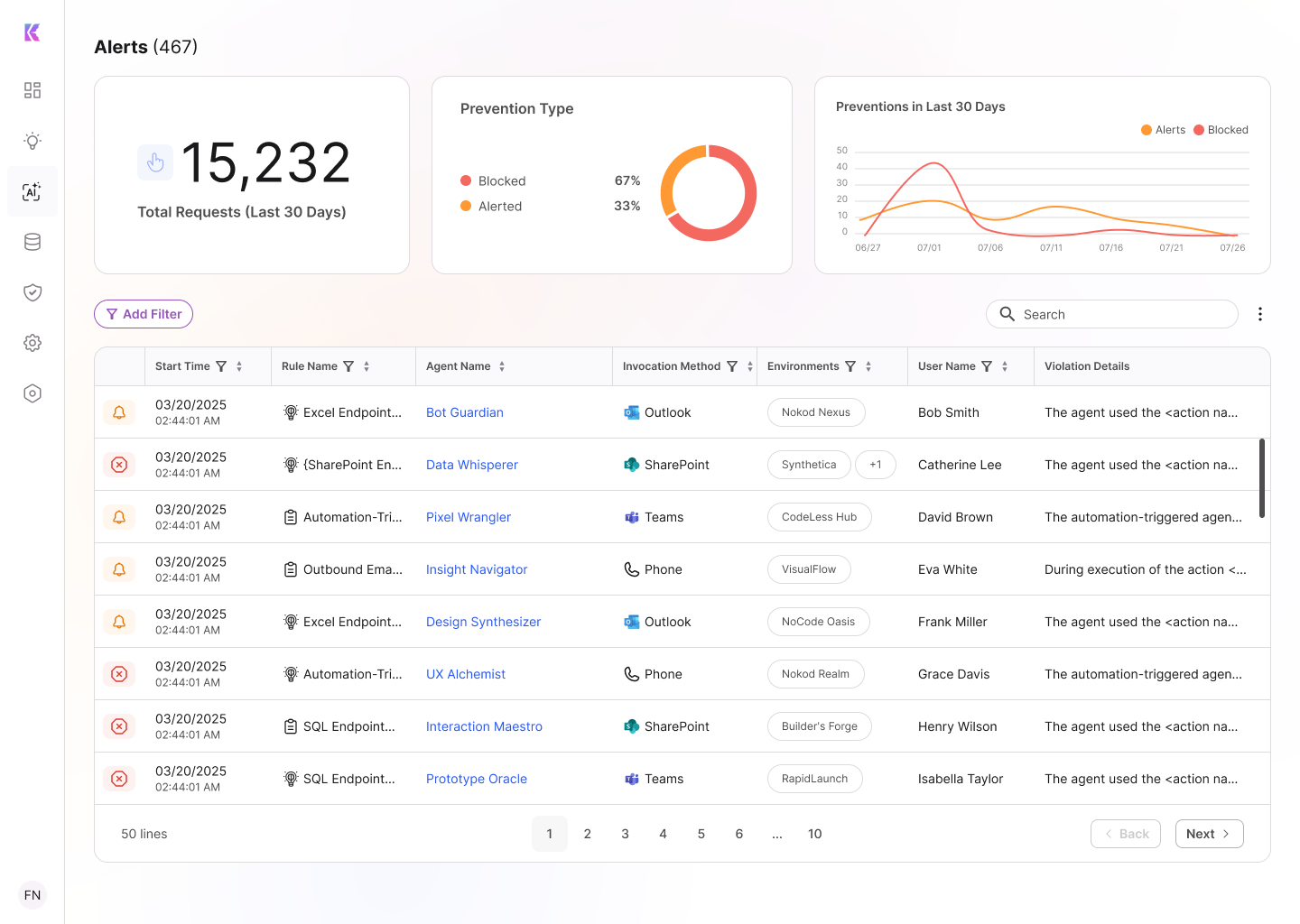

Security must evolve from static policy enforcement to continuous assurance: discovery, governance, and protection that operate while systems run.

That means:

- Continuously discovering business-built AI systems

- Governing through enforceable, technical boundaries

- Protecting behavior at runtime

Why This Matters Now

AI agents and business-built systems are no longer edge cases. They are embedded in enterprise operations. As shadow AI expands and citizen developers accelerate innovation, governance frameworks without runtime enforcement create blind spots.

Ignoring emergent runtime risk means exposure, whether from malicious actors or unintended internal activity.

Security teams need visibility, contextual enforcement, and adaptive controls that move at the speed of the systems they protect.

Download the Full Framework

This article outlines the challenge. The full operational framework, including detailed scenarios and a structured approach to runtime protection, goes deeper.

In our whitepaper, “Runtime Protection for AI Agents and Business-Built Applications: Securing the Adaptive Future,” we explore:

- How shadow AI becomes invisible production infrastructure

- Where citizen development governance falls short

- How responsible AI governance must extend into runtime enforcement

- A four-step model: Recognize → Discover → Govern → Protect

- How adaptive guardrails reduce risk without slowing innovation